The number and complexity of quality metrics in healthcare continue to expand, and many of these metrics are used to compare performance between hospitals, systems, and clinicians. To make these comparisons fair, many quality reporting agencies attempt to “risk stratify” the metrics so as not to penalize those caring for higher-complexity patients. Although laudable, these attempts also increase the complexity of the data and may reduce clinicians’ ability to understand and analyze quality performance.

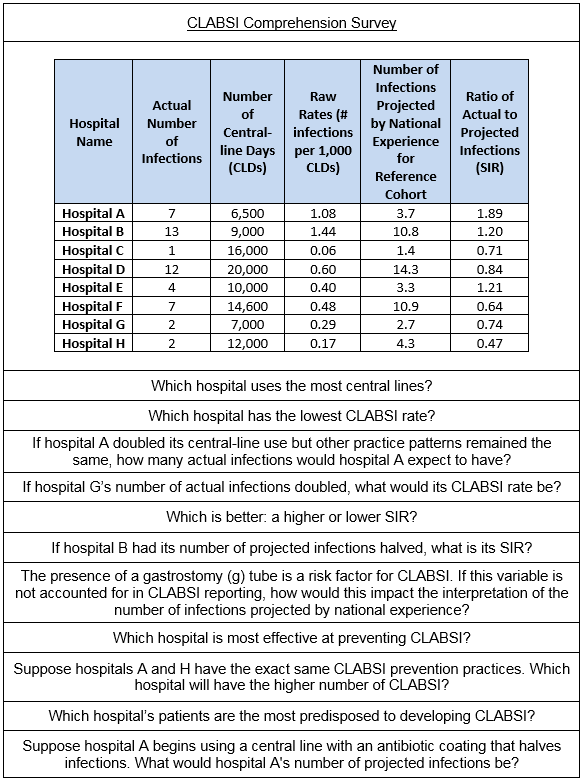

A recent article in the Journal of Hospital Medicine explores clinicians’ understanding of quality metrics, using central line-associated bloodstream infections (CLABSIs) as an example. The investigators used a novel Twitter-based survey to explore how clinicians interpret basic concepts in publicly reported CLABSI rates and ratios.

I recently caught up with the lead author, Dr. Sushant Govindan, to better understand his team’s research and its implications for quality reporting. Dr. Govindan is a Pulmonary-Critical Care fellow in the Department of Medicine at the University of Michigan Health System, as well as a Fellow at the Institute for Healthcare Policy and Innovation at the University of Michigan.

Briefly summarize what you found from your research.

Our team surveyed a convenience sample of clinicians (doctors, nurses, and public health professionals). We wanted to get a preliminary sense of whether clinicians actually understand basic quality metrics. We cognitively tested several questions to arrive at the final 11-question survey. The two main takeaways from the research were:

- Clinicians probably do not understand quality metrics as well as we assume.

- The low overall performance was driven primarily by a poor understanding of risk adjustment.

Tell us more about the rationale behind your survey methodology.

Our team chose a Twitter-based sampling frame because it was novel and allowed for rapid sampling. We used a series of solicitation tweets and a clinician-jargon (“weed-out”) question to ensure we recruited knowledgeable clinicians. Each tweet linked to a user-friendly SurveyMonkey form.

What would you recommend to hospitalists who are yearning to fully understand their local quality metrics and how they compare to others?

First, this is complex data. Hospitalists should not hesitate to ask their leadership for clarification, particularly when interpreting the data and trying to gain actionable feedback. My hypothesis is that most people are not exposed to this data as often as they would like, and when they are, it is presented in ways that may be confusing. I would recommend that hospitalists talk with their leaders about more effective ways to deliver and format the data to make it more understandable and engaging.

How did you become interested in quality metric comprehension?

I have long been interested in clinician behavior and what motivates us to change it. There is substantial literature on patient behavior and decision-making, but comparable work on clinician behavior and decision-making seems to be lacking. It is not well known how clinicians view data and what they do with it cognitively. We spend billions of dollars developing quality metrics, and we are incentivizing performance around them. Yet we really don’t understand the best ways to deliver and display them in a way that engages clinicians.

What does your work suggest is needed for future research, education, or practice?

We are replicating this study right now in an expert population to gain a better understanding of the topic. I would love to learn whether different infographic formats would improve clinician comprehension of quality metric data and, in turn, change their perception of the data’s reliability. The ultimate goal is to understand the factors that motivate clinician behavior change in response to feedback information.

Special thanks to Dr. Govindan for spending a few minutes with us to delve further into his published work on how clinicians understand quality metrics. We look forward to learning more about his future work on improving comprehension of these metrics within the healthcare industry.
