Let me get the Shameless Commerce portion of this post out of the way: the second edition of my book, Understanding Patient Safety, was published this month by McGraw-Hill. I hope you’ll consider picking up a copy.

That done, I’ll turn to something more interesting: the rapid evolution of the safety field, as seen through the lens of writing the new edition, which I did last fall while on sabbatical in London. When I sat down to update the book (whose first edition I wrote in 2007), I anticipated just a little tweaking here and freshening up there. But writing a new edition of a book is like observing your kids through the eyes of a person who hasn’t seen them for years and notices the profound changes you missed. To stretch the analogy, updating Understanding Patient Safety convinced me that while the safety field is still maturing, it has advanced well beyond the awkward years of adolescence.

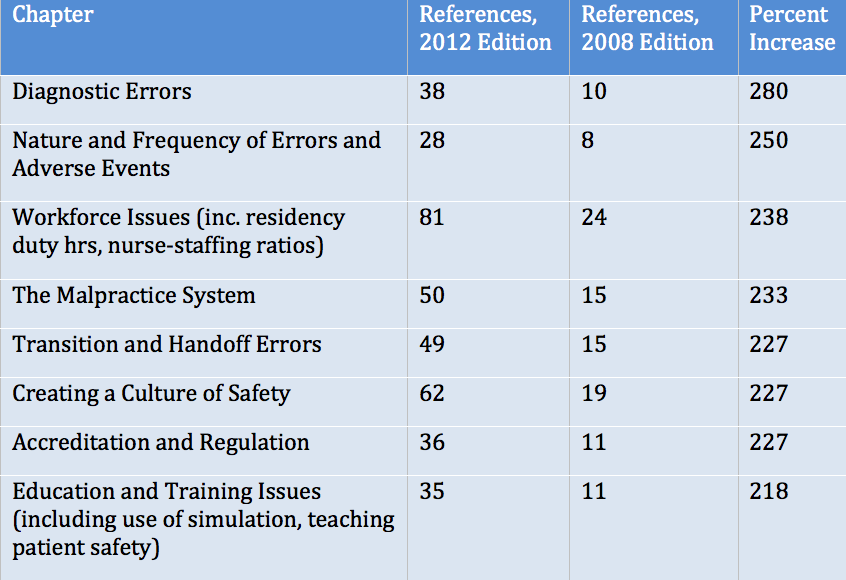

The field’s growth is mirrored by the expansion of the book itself. The first edition ran 256 pages, while the second edition is 412, a 61 percent increase. Ditto the references: the first edition had 423; the second, 1042. One gets a rough sense of where the action is in patient safety by looking at the chapters with the greatest increases in references (Table).

Reflecting back on what I learned in putting the new book together, here are just a few of the areas that have evolved the most dramatically in recent years:

Information technology: In the early days of the safety field, many people saw healthcare information technology (HIT) as the Holy Grail. Our naiveté – about the value of HIT and its ease of implementation – has been replaced by a much more realistic appreciation of the challenges of implementing HIT systems and harnessing them in the service of safety. Several huge HIT installations have failed (including one at my own hospital; we recently pulled the plug on our original system and installed today’s cool kid, Epic, which was just one of the pack five years ago). The U.S. government is doling out more than $20 billion to support HIT systems, a sum that would have been inconceivable in 2007 (the $700 billion stimulus bill, passed in 2009, provided a crawlspace in which these HIT incentives were hidden, provoking barely a peep about creeping socialism). The federal payments are leading to the hoped-for uptick in HIT installations.

As more hospitals and clinics become wired, we are beginning to appreciate the true safety benefits of HIT, as well as some of the unanticipated consequences and potential harms. In the latter category are issues that were barely on my radar screen in 2007: the mountains of work created for doctors once we can see all the information – and chaos – in one place (so much for the “efficiency gains” from HIT); the “copy and paste” phenomenon and the diminishing value of chart notes; and how HIT systems can separate us from our patients, as eloquently captured by Abraham Verghese in his description of “the iPatient.”

Measurement of safety, errors, and harm: In the early years, errors were our main target, and we focused on measuring, and decreasing, error rates. Today, we think more about measuring and attacking “harm” or “adverse events.” The Institute for Healthcare Improvement’s (IHI) Global Trigger Tool (GTT) – which permits a focused chart review looking for harm – has become increasingly popular, particularly as the limitations of other methods (clinician-generated incident reports, the AHRQ Patient Safety Indicators, standardized mortality ratios) have become more apparent. Three large studies have documented high rates of harm using the GTT; one particularly influential and disheartening study found no significant improvement in harm measures in North Carolina hospitals between 2003 and 2008.

The checklist: While I was prescient enough to include the concept of checklists in the first edition, I wasn’t exactly Carnac the Magnificent: I used the term precisely once (page 22: “Errors in routine behaviors (‘slips’) can best be prevented by building in redundancies and cross checks, in the form of checklists, read backs… and other standardized safety procedures….”). The first edition’s cover – which features a host of hazards: a pill bottle, a syringe, a scalpel and retractor, and an x-ray – reflects that era’s focus on errors.

In the past few years, the success of checklist-based interventions in preventing central line–associated bloodstream infections and surgical complications, coupled with articles and books by respected safety leaders (particularly Drs. Pronovost and Gawande), has bestowed on the humble checklist superstar status in the safety field. Illustrating this transformation, I chose a picture of the WHO Safe Surgery checklist for the cover of the 2nd edition, and the term “checklist” is indexed 17 times, in seven different chapters. Importantly, Pronovost, Gawande, and others caution that checklists are not a magic bullet – they can fail when introduced without sufficient attention to matters of culture and leadership.

Safety targets: When To Err Is Human was published in 2000, few people discussed healthcare-associated infections (HAIs) in the same breath as patient safety. I demonstrated my own mental silos by commissioning an article (by former CDC chief Julie Gerberding) in 2002 regarding what the safety field could learn from infection prevention. By the time of the first edition of Understanding Patient Safety, I had begun to recognize the overlap: there is a section on ventilator-associated pneumonia and another on central line–associated bloodstream infection. The second edition goes much further, with new content on several additional nosocomial infections, including urinary tract infections and MRSA.

Our embrace of HAIs has been driven by the fact that such infections are more easily measured and, in some cases, prevented than other kinds of harm. While this prioritization is natural, it risks having us give short shrift to other crucial targets that are less easily measured and fixed. The poster child for this selective inattention is diagnostic errors; I’m pleased that this common and serious category of hazards has attracted more attention in recent years, a trend that is reflected in the book.

Policy issues in patient safety: In the early years, much of the pressure to improve safety came from accreditors such as the Joint Commission, and from the media, local and regional collaborations, and nongovernmental organizations such as the IHI. In updating the book, I was struck by the emergence of a true business case for safety, driven by transparency initiatives, fines for serious cases of harm, “no pay for errors” policies, and now Value-based Purchasing – none of which were present (or if they were, they had training wheels on) in 2007. In the past few years, quality and patient safety have moved from being important-but-elective to being mission-critical. This is a stunning development, one that occurred at a pace far faster than I would have predicted.

Balancing “no blame” and accountability: As I mentioned in my recent post on James Reason and Swiss cheese, our early focus was on improving systems of care and creating a “no blame” culture. This focus was not only scientifically correct (based on what we know about errors in other industries) but also politically astute. Particularly for U.S. physicians – long conditioned to hearing the term “error” and reflexively thinking “medical malpractice” – the systems approach generated a level of goodwill and buy-in that would have been impossible with a more clinician-centered focus.

Perhaps the greatest change in my own thinking between 2007 and today is my greater appreciation of the need to balance a “no blame” approach (for the innocent slips and mistakes for which it is appropriate) with an accountability approach (including blame and penalties as needed) for caregivers who are habitually careless, disruptive, or unmotivated, or who fail to heed reasonable quality and safety rules. Fine-tuning this balance may well be the most challenging and important issue facing our field over the next 5-10 years.

* * *

One other emerging trend is our new appreciation of patient safety as a complex field – complex in the scientific, paradigmatic sense, as in “complexity theory” and “complex adaptive systems” (neither of which was mentioned in the first edition). But it’s also complex in the old-fashioned sense: multidimensional, constantly evolving, and endlessly interesting. I was struck by all of this in writing the second edition of Understanding Patient Safety, and hope I captured some of the wonder and fascination in the new book.

Sold! I look forward to reading the second edition.

Will it be published in Portuguese (Brazil)?

I am new to the field, and have been struck by the almost complete absence of work on patients’ own fears about their safety while hospitalized. Inadequate control of (controllable), long wait times to get help when required, staff incompetence (which includes wrong/too much/too little meds), poor communication, mixups, possibly no one there when having a crisis, etc. are what patients fear, and there is very little connection between traditional patient safety topics and their fears.

I see these fears as having real consequences, for example a patient has a bad toileting experience and decides not to eat or drink the rest of their stay, leading to kidney or bladder issues.

Maybe the obvious, though almost unstudied, connection between these fears and patient satisfaction will raise their presence on investigators’ radar screens, since 30% of incentive payments will be tied to satisfaction scores starting in October.

This is a very interesting thought and very real. I do worry about clinicians’ hands, surfaces, and equipment being contaminated when I go for healthcare. Interesting field to explore!

Congratulations Bob. Happy for all of us that the new edition has been released.

Thanks, everyone.

For Paulo, the first edition was published in Portuguese, and we are in early discussions with some of your Brazilian colleagues about publishing this edition as well. Espero que sim (I hope so).

Where can I get information on the behaviors doctors are displaying that would have been accepted in the past but are now getting them dismissed from many hospitals?

I’ll go for it; maybe I can write a fair critique.

Maybe this is the time for a Spanish version too.

Congratulations Bob, glad to know you are alive & kicking!

This is a very helpful and timely update to the 2007 book. I work in the field of medical informatics and I am encouraging all staff who work in the hospital clinical informatics department to read this book. It provides a nice balance of referenced materials for experienced clinicians with summarization of key points for those exploring the topic for the first time.

Congrats on this accomplishment.

Todd Rowland

[…] Bob Wachter’s post this summer, The New and Improved “Understanding Patient Safety” and Evolution of the Safety Field, he states that the greatest change in his thinking over the past 5 years is the need to balance: […]
